The S3 at Scale Runbook

Detecting and fixing cardinality explosions in production buckets

In the previous article, "How a simple S3 design decision turned into a $7M cost", we analysed a production system that accumulated 1.56 trillion objects in a single S3 bucket. The architecture scaled perfectly from a functional perspective, but it nearly triggered a $7.2M lifecycle transition event.

The root cause was not storage volume. It was object cardinality.

This article is the operational companion: a runbook for diagnosing and fixing small-object explosions in production S3 systems.

The Operational Model

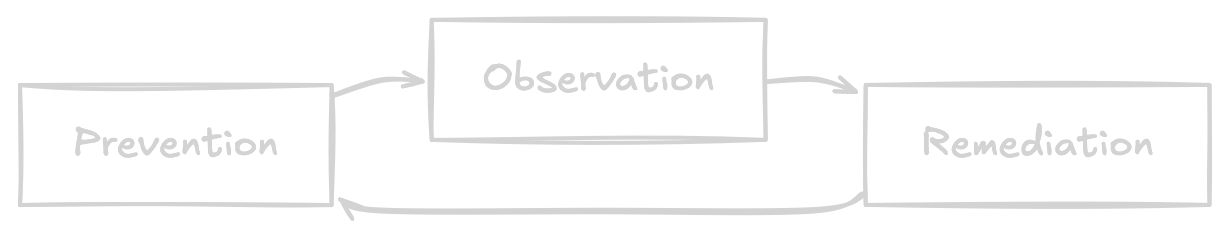

Operating S3 at scale follows a simple lifecycle (see the diagram above).

Most large S3 cost failures occur because observation is missing.

Quick Triage

When S3 costs increase unexpectedly, start with one simple check: the average object size.

average object size = total bucket size / number of objects

If this number becomes too small, the system is accumulating fragmented artifacts.

Rule of thumb

> 10 MB → Healthy

1–10 MB → Acceptable

< 1 MB → Fragmentation risk

< 128 KB → CRITICAL

128 KB matters because:

by default, lifecycle transition rules do not apply to objects smaller than 128 KB

some storage classes bill small objects as if they were 128 KB minimum

Once the average object size drops below this boundary, the system shifts into object-dominated pricing behaviour.
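The rule of thumb above can be sketched as a small helper. This is a minimal illustration, not part of the original tooling: the function name and the returned labels are assumptions, and the boundaries are the ones listed in the rule of thumb.

```python
KB = 1024
MB = 1024 * KB


def classify_average_object_size(total_bytes: int, object_count: int) -> str:
    """Map a bucket's average object size onto the rule-of-thumb tiers."""
    if object_count <= 0:
        raise ValueError("object_count must be positive")
    avg = total_bytes / object_count
    if avg < 128 * KB:
        return "critical"
    if avg < 1 * MB:
        return "fragmentation risk"
    if avg <= 10 * MB:
        return "acceptable"
    return "healthy"


# Hypothetical bucket: 5 TiB spread over 100 million objects (about 54 KB each).
print(classify_average_object_size(5 * 1024**4, 100_000_000))  # critical
```

The inputs correspond to the two CloudWatch values used later in this runbook: total bucket size in bytes and number of objects.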

Diagnosis

Step 1 — Check bucket metrics

The quickest way to detect a cardinality issue is to compute average object size directly from CloudWatch metrics.

CloudWatch already exposes the required metrics:

AWS/S3 BucketSizeBytes

AWS/S3 NumberOfObjects

CloudWatch Dashboard Widget

Paste the following JSON into a CloudWatch dashboard (Source view) and replace the bucket name.

{
  "metrics": [
    [ "AWS/S3", "NumberOfObjects", "BucketName", "example-bucket", "StorageType", "AllStorageTypes", { "id": "m1", "stat": "Sum", "label": "objects", "visible": false } ],
    [ ".", "BucketSizeBytes", ".", ".", ".", "StandardStorage", { "id": "m2", "yAxis": "right", "label": "size", "visible": false, "stat": "Maximum" } ],
    [ { "expression": "(m2/m1) / 1024", "label": "average object size (KB)", "id": "e1" } ]
  ],
  "view": "timeSeries",
  "stacked": false,
  "region": "us-east-1",
  "period": 86400,
  "stat": "Average"
}

This widget computes average object size and displays it in kilobytes. Note that the size metric covers only the Standard storage class, while the object count covers all storage types. If the bucket holds data in other classes, add one BucketSizeBytes metric per StorageType and sum them.

This is the single most useful metric for detecting S3 cost drift.

If the graph trends downward toward 128 KB, the bucket is likely accumulating fragmented artifacts faster than storage volume is growing.

Step 2 — Verify lifecycle eligibility

Average object size tells you that fragmentation exists.

The next step is determining whether lifecycle remediation will actually work. This requires analysing the distribution of object sizes.

Why this matters:

By default, S3 Lifecycle rules do not transition objects smaller than 128 KB, including to the Glacier storage classes (this can be overridden explicitly).

If most objects fall below this threshold, there are two outcomes: with the default behaviour, lifecycle transitions are never triggered for them; with a rule configured to include smaller objects, the transitions risk incurring large request costs.

Example Athena query

Using S3 Inventory:

SELECT
  CASE
    WHEN size < 32768 THEN '<32KB'
    WHEN size < 131072 THEN '32KB–128KB'
    WHEN size < 1048576 THEN '128KB–1MB'
    ELSE '>1MB'
  END AS size_bucket,
  COUNT(*) AS object_count
FROM s3_inventory_table
GROUP BY 1
ORDER BY 1;

Example output:

<32KB : 420B objects
32KB–128KB : 230B objects
128KB–1MB : 50B objects
>1MB : 20B objects

Interpretation:

→ ~650B objects below 128 KB

→ lifecycle transitions will be largely ineffective

In this scenario, lifecycle transitions would generate massive transition costs while producing minimal storage savings.
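To turn the size distribution into a single number, the share of objects below the 128 KB boundary can be computed from the query result. The sketch below uses the example output above as input. The function name and the dictionary layout are illustrative assumptions, not an Athena or S3 Inventory API.

```python
# Bucket labels as produced by the example query's CASE expression.
LT_32KB = "<32KB"
LT_128KB = "32KB–128KB"
LT_1MB = "128KB–1MB"
GT_1MB = ">1MB"


def share_below_threshold(histogram: dict[str, int], small_buckets: set[str]) -> float:
    """Return the fraction of objects that fall into the given size buckets."""
    total = sum(histogram.values())
    if total == 0:
        raise ValueError("histogram is empty")
    small = sum(count for label, count in histogram.items() if label in small_buckets)
    return small / total


# Object counts taken from the example output (B = billion).
histogram = {
    LT_32KB: 420_000_000_000,
    LT_128KB: 230_000_000_000,
    LT_1MB: 50_000_000_000,
    GT_1MB: 20_000_000_000,
}

share = share_below_threshold(histogram, {LT_32KB, LT_128KB})
print(f"{share:.1%} of objects are below 128 KB")  # 90.3% of objects are below 128 KB
```

In this example roughly nine out of ten objects are below the default transition boundary, which is why default lifecycle rules would leave most of the bucket untouched.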

Monitoring

Once the issue is diagnosed, implement continuous monitoring. The same average object size metric applies.

Tracking this value over time provides an early signal of fragmentation long before cost increases appear on billing dashboards.

Alerting

A practical alert should trigger when: AverageObjectSize < 128 KB for three consecutive days.

Requiring multiple evaluation periods avoids alerts caused by temporary ingestion spikes or batch jobs.
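The alert condition can be sketched in a few lines to make the "three consecutive days" rule concrete. This is only an illustration of the evaluation logic, assuming one average-object-size datapoint per day, oldest first. The function name is hypothetical, and CloudWatch performs the real evaluation.

```python
THRESHOLD_BYTES = 128 * 1024


def should_alert(daily_avg_sizes: list[float],
                 threshold: float = THRESHOLD_BYTES,
                 days: int = 3) -> bool:
    """Return True if the most recent `days` datapoints are all below the threshold."""
    if len(daily_avg_sizes) < days:
        return False  # not enough history to evaluate yet
    return all(size < threshold for size in daily_avg_sizes[-days:])


# A one-day dip caused by a batch job does not trigger the alert...
print(should_alert([400_000, 90_000, 380_000, 370_000]))   # False
# ...but three consecutive days below 128 KB do.
print(should_alert([400_000, 120_000, 110_000, 100_000]))  # True
```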

AWS CDK example

import { Duration } from 'aws-cdk-lib';
import * as cloudwatch from 'aws-cdk-lib/aws-cloudwatch';

// The code below is meant to run inside a Stack or Construct, where `this` is the scope.
const bucketName = 'your-bucket-name';

// Core S3 storage metrics (reported once per day)
const objects = new cloudwatch.Metric({
  namespace: 'AWS/S3',
  metricName: 'NumberOfObjects',
  dimensionsMap: { BucketName: bucketName, StorageType: 'AllStorageTypes' },
  statistic: 'Sum',
  period: Duration.days(1),
});

const bytes = new cloudwatch.Metric({
  namespace: 'AWS/S3',
  metricName: 'BucketSizeBytes',
  dimensionsMap: { BucketName: bucketName, StorageType: 'StandardStorage' },
  statistic: 'Maximum',
  period: Duration.days(1),
});

// Average object size = bytes / objects
const avgSize = new cloudwatch.MathExpression({
  expression: 'bytes / objects',
  usingMetrics: { bytes, objects },
  label: 'avg object size (bytes)',
  // Set the period on the expression too: it overrides the periods of its input metrics.
  period: Duration.days(1),
});

// Alert if <128 KB for 3 days
new cloudwatch.Alarm(this, 'S3CardinalityAlarm', {
  metric: avgSize,
  threshold: 128 * 1024,
  evaluationPeriods: 3,
  datapointsToAlarm: 3,
  comparisonOperator: cloudwatch.ComparisonOperator.LESS_THAN_THRESHOLD,
});

Remediation

Once fragmentation is confirmed, remediation must be planned carefully. At large scale, fixing the dataset can itself be expensive.

Step 1 — Estimate lifecycle transition cost

Lifecycle transitions are priced per 1,000 objects. Estimate remediation cost before enabling transitions:

Example:

objects = 720_000_000_000
price_per_1000 = 0.01  # USD per 1,000 transition requests
transition_cost = (objects / 1000) * price_per_1000
print(f"${transition_cost:,.0f}")

Output:

$7,200,000

This calculation prevented a multi-million-dollar lifecycle event in the system analysed in the previous article.
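The one-off transition cost is only half of the picture. The other half is how long the storage savings take to pay it back. The sketch below is a rough estimate under stated assumptions: all prices are caller-supplied parameters, the average object size stands in for every object, and the optional minimum billable size models storage classes that bill small objects as 128 KB. The function name and parameters are hypothetical.

```python
import math

GIB = 1024 ** 3


def transition_payback_months(
    object_count: int,
    avg_object_bytes: float,
    transition_price_per_1000: float,
    source_price_per_gb_month: float,
    target_price_per_gb_month: float,
    target_min_billable_bytes: int = 0,
) -> float:
    """Months of storage savings needed to recover the one-off transition cost."""
    transition_cost = object_count / 1000 * transition_price_per_1000
    source_monthly = object_count * avg_object_bytes / GIB * source_price_per_gb_month
    # Small objects may be billed at a minimum size in the target class.
    billed_bytes = max(avg_object_bytes, target_min_billable_bytes)
    target_monthly = object_count * billed_bytes / GIB * target_price_per_gb_month
    monthly_saving = source_monthly - target_monthly
    if monthly_saving <= 0:
        return math.inf  # the transition never pays for itself
    return transition_cost / monthly_saving


# Placeholder inputs: 720B objects of 32 KB each, illustrative per-GB prices.
months = transition_payback_months(
    object_count=720_000_000_000,
    avg_object_bytes=32 * 1024,
    transition_price_per_1000=0.01,
    source_price_per_gb_month=0.023,  # placeholder, check current pricing
    target_price_per_gb_month=0.004,  # placeholder, check current pricing
    target_min_billable_bytes=128 * 1024,
)
print(f"payback: {months:.1f} months")
```

A result of infinity means the target class costs at least as much per month as the source once minimum billable sizes are applied, so the transition should not be enabled at all.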

Step 2 — Execute remediation safely

Avoid custom scripts for billion-object operations.

Instead use S3 Batch Operations, which provides:

automatic parallelisation

retry handling

distributed execution across AWS infrastructure

Batch Operations is designed specifically for large-scale object-level changes.
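A Batch Operations job takes a manifest listing the objects to act on. As a minimal sketch, the helper below builds a CSV manifest for a targeted subset of keys. It assumes the CSV layout of one bucket,key pair per row with URL-encoded keys, so verify the format against the current Batch Operations documentation. The function name is hypothetical. For billions of objects, an S3 Inventory report is the more practical manifest source.

```python
import csv
import io
from urllib.parse import quote


def build_manifest_csv(bucket: str, keys: list[str]) -> str:
    """Render a bucket,key CSV manifest with URL-encoded object keys."""
    out = io.StringIO()
    writer = csv.writer(out, lineterminator="\n")
    for key in keys:
        # Keep "/" readable; encode spaces, commas and other special characters.
        writer.writerow([bucket, quote(key, safe="/")])
    return out.getvalue()


manifest = build_manifest_csv(
    "example-bucket",
    ["telemetry/2024/part 1.json", "snapshots/a,b.bin"],
)
print(manifest)
```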

Prevention

If the system is still evolving, implement patterns to prevent future cardinality explosions.

Aggregate small artifacts

Bad pattern: request → write 200 objects

Better pattern: request → buffer → write 1 aggregated object

If artifacts are generated in the KB range, introduce a buffering layer before writing to S3.
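A buffering layer can be sketched as follows. This is an illustration under assumptions, not a production design: the class name, the 4-byte length-prefix framing, and the injected `write_object` callable (which would wrap the actual S3 upload) are all hypothetical. A real system would also need to flush on a timer and on shutdown.

```python
from typing import Callable


class ArtifactBuffer:
    """Collect small artifacts and write them out as one aggregated object."""

    def __init__(self, write_object: Callable[[bytes], None],
                 flush_bytes: int = 8 * 1024 * 1024) -> None:
        self._write_object = write_object  # e.g. a function that uploads to S3
        self._flush_bytes = flush_bytes
        self._chunks: list[bytes] = []
        self._size = 0

    def add(self, artifact: bytes) -> None:
        # Length-prefix each artifact so records can be split apart again on read.
        record = len(artifact).to_bytes(4, "big") + artifact
        self._chunks.append(record)
        self._size += len(record)
        if self._size >= self._flush_bytes:
            self.flush()

    def flush(self) -> None:
        if not self._chunks:
            return
        self._write_object(b"".join(self._chunks))
        self._chunks = []
        self._size = 0


# Stand-in for S3: collect the aggregated objects in a list.
written: list[bytes] = []
buffer = ArtifactBuffer(written.append, flush_bytes=1024)
for _ in range(200):
    buffer.add(b"x" * 100)
buffer.flush()  # write whatever is left
print(len(written))  # 20 aggregated objects instead of 200 small ones
```

The flush threshold controls the trade-off: a larger value produces fewer, bigger objects but keeps more unwritten data in memory.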

Design operational prefixes

Structure buckets around operational boundaries.

Example:

s3://bucket/
  service-a/
  service-b/
  telemetry/
  snapshots/

Benefits:

targeted lifecycle rules

efficient Athena queries

easier remediation

Prefer metadata for read-time inspection

If object attributes must be inspected during reads, use object metadata.

Use tags primarily for:

lifecycle rules

governance policies

Metadata avoids additional API calls when reading large numbers of objects.

Operational Checklist

When S3 costs spike unexpectedly

1️⃣ Check object count

2️⃣ Compute average object size

3️⃣ If <128 KB → fragmentation detected

4️⃣ Verify lifecycle eligibility (size distribution)

5️⃣ Estimate remediation cost

6️⃣ Use Batch Operations for large-scale fixes

Conclusion

The system analysed in the previous article scaled perfectly. It simply became economically unstable.

At petabyte scale, object storage is no longer purely a storage problem. It becomes a cardinality management problem.

Treat object count as a first-class operational metric.

Otherwise, a perfectly functioning system can quietly drift into a multi-million-dollar bill.