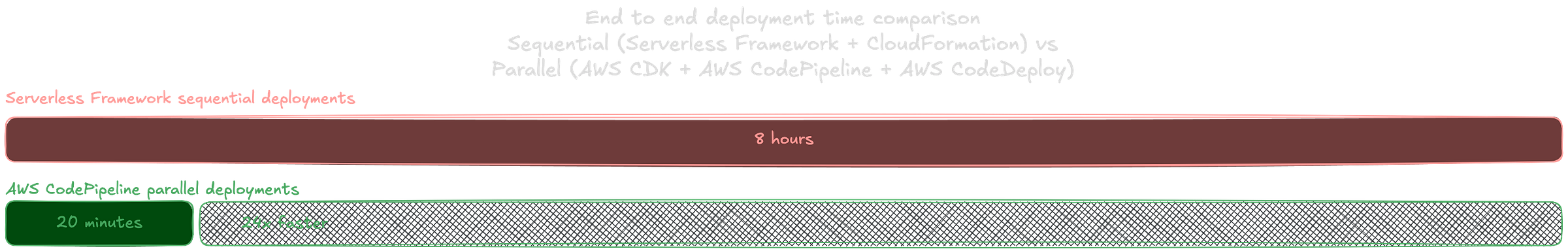

From 8 Hours to 20 Minutes: Deploying an MVP to Production

Why we spent 8 hours babysitting CloudFormation and how we automated our way out

This is an engineering case study from a project I was part of around 2021–2022. At the time, I was working at a software consultancy and was placed with a large Australian tertiary education solutions provider to help take an existing system from a working MVP to something that could operate reliably in production. The system itself was already functional, but the way it was built, deployed, and operated had not yet caught up with that reality.

The System

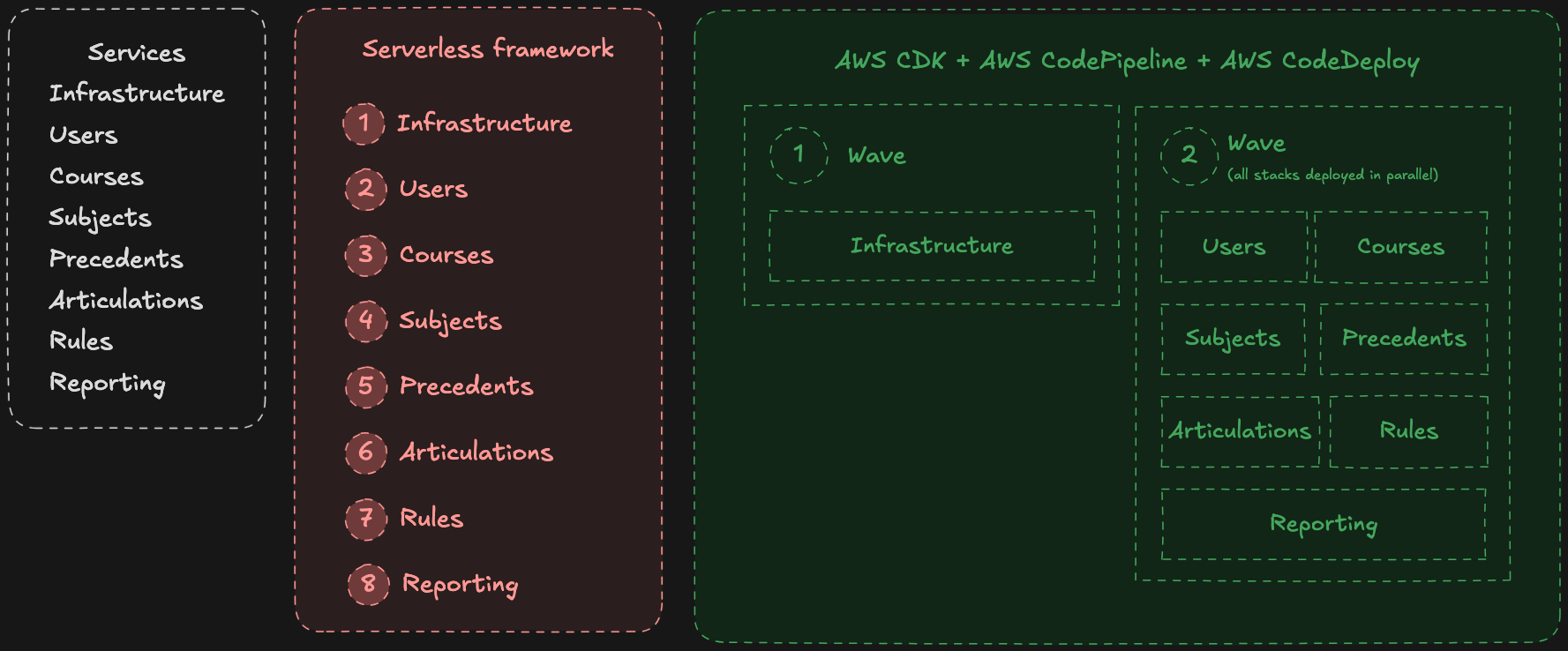

The system was a credit management platform for the tertiary education sector, designed to support application assessment, articulation rules, subject matching, and reporting workflows across institutions.

From an architectural perspective, it was already decomposed into independent domains. The system consisted of seven micro services alongside a shared infrastructure stack. Each service owned a specific area of the platform — users, courses, subjects, precedents, articulations, rules, and reporting — with roughly 15–20 Lambda functions per service.

The team consisted of eight engineers, and the system itself was functional. The focus was shifting from an MVP that answered ‘does it work?’ to a production-ready system that could be operated reliably.

That shift was the catalyst for unearthing multiple operational problems.

What Was Inherited

The infrastructure was built using the Serverless Framework, defined in YAML, and deployed via CloudFormation. The application code was written in JavaScript and bundled using Webpack.

Deployments were executed manually via the Serverless CLI, stack by stack, and the entire team worked in a single shared development environment.

These choices were reasonable for an MVP, but they didn’t scale well with the system or the team.

The Problems

Day-long deployments

Deployments took between six and eight hours.

They were run manually, in sequence, with each stack deployed one after the other. Even services that had no dependency on each other were forced into a linear deployment order.

In practice, a deployment looked like this: the lead engineer would trigger a CLI deploy, then sit and watch as each Lambda was built and packaged one by one. This would eventually roll into a CloudFormation stack update, where the deployment progressed resource by resource and required monitoring throughout. A failure partway through would trigger an automatic rollback, after which the entire process had to be run again.

Each stack took roughly 45–60 minutes to complete. Once one finished, the process was repeated for the next, and then the next, until all services had been deployed.

Because of the duration and the way deployments behaved end-to-end, supervision was required. In practice, this meant that once a week, the project’s lead engineer would spend an entire day running a production deployment and monitoring it throughout.

Slow bundling

The application was bundled using Webpack.

Each Lambda function triggered a full build of the entire project. There was no build caching in place, and builds were repeated across functions even when most of the code was shared.

This meant that a large part of the deployment time was not spent deploying — it was spent rebuilding the same codebase multiple times.

Bundling tools have improved significantly since, particularly with caching and incremental builds. But in this setup, the build process amplified the cost of every change.

Stepping on each other’s toes

All development and testing happened in a single shared environment.

Engineers deployed changes into the same stacks and tested on top of each other’s work. Even unrelated changes could interfere, simply because they shared the same runtime environment.

Testing became coupled to timing. Engineers had to coordinate deployments, wait for others to finish, and occasionally re-test work that had been affected by someone else’s changes.

No CI/CD

As previously mentioned in Day-long deployments, deployments were entirely manual.

There was no pipeline, no automation, and no abstraction over the deployment process. Every release required someone to be present, run commands, and monitor the outcome.

This increased both the time cost and the cognitive load of deployments.

Infrastructure as static templates

Infrastructure was defined as YAML templates.

While this worked for defining resources, it limited how infrastructure could be structured and evolved. Relationships between components were implicit, and changes required careful coordination across files.

A Visual Summary

On paper, the system was composed of micro-services. During deployment, it behaved as a monolith.

The rest of this article focuses on how we moved from the structure on the left to the one on the right.

The Operational Re-vamp

To summarise the decisions taken, we made a set of foundational changes to how the system was built and deployed:

Migrated infrastructure from the Serverless Framework to AWS CDK

Replaced Webpack with esbuild for faster, incremental builds

Standardised on TypeScript across both infrastructure and application code

Introduced an AWS CI/CD pipeline using CodePipeline and CodeDeploy to orchestrate and execute deployments

Introduced a custom local deployment command to compile, package, and deploy individual Lambdas using esbuild and the AWS CLI, providing the same functionality as the Serverless deploy command with significantly reduced execution time

These changes established the baseline that allowed the deployment model to be restructured.

From day-long deployments to parallel waves

By leveraging AWS CodePipeline, deployments were restructured into two waves.

The first wave handled shared infrastructure. Once complete, all service stacks were deployed in parallel. Independent services were no longer forced into a sequential deployment order.

This removed a significant portion of idle waiting time during deployments.

From full rebuilds to incremental builds

Migrating from Serverless to AWS CDK allowed us to remove our dependency on Webpack and adopt a faster bundling approach using esbuild.

Builds became incremental, shared code was reused, and unchanged components were no longer recompiled on every deployment. Instead of rebuilding the entire system repeatedly, only what changed was rebuilt.

This reduced build times from hours to minutes.

It’s worth noting that Webpack itself was not the bottleneck here. While it had already introduced support for caching and incremental builds, our setup relied on a Serverless Framework plugin that had not yet adopted those capabilities. In practice, this meant full rebuilds were still occurring on every deployment.

From shared environment to sandboxed development

Sandbox environments were introduced for development.

Each engineer could deploy their own version of the Lambda functions while still pointing to shared infrastructure. This allowed changes to be tested in isolation without affecting others.

Development became parallel. Engineers no longer needed to coordinate to validate their work.

From manual execution to CI/CD

Deployments were moved into an automated pipeline orchestrated by AWS CodePipeline.

CodePipeline was configured with a source stage connected to our GitHub repository, triggering the pipeline on code changes. From there, it coordinated the flow through build and deployment stages, handing off to CodeDeploy for execution.

Releases could be initiated without manual intervention, and the need for supervision was removed. Roll outs became repeatable and consistent.

From templates to programmable infrastructure

Infrastructure was redefined using a programmatic approach.

This allowed explicit modelling of components, clearer structure, and reuse of logic. Infrastructure became part of the system, rather than a separate configuration layer.

The Result

Deployments went from around eight hours down to approximately twenty minutes.

Individual Lambda updates went from ~2 minutes using the Serverless CLI to under thirty seconds with a custom local deployment command.

Full system deployments no longer required supervision.

Engineers could test changes independently without interfering with each other.

The most important improvement was not just speed. It was the removal of unnecessary coupling — in builds, in deployments, and in how the team worked.

A Balanced Take

The original tooling was not incorrect. It was well-suited for getting the system to a working state quickly.

As the system grew, the requirements changed. More control was needed over how deployments were structured, how builds were executed, and how engineers interacted with the system.

Many of the limitations encountered here may be addressed differently today with newer tooling and improved workflows. But the underlying question remains:

Should the way a system is deployed reflect how it is structured?

The system itself did not change dramatically. The services were already there. The boundaries already existed. What changed was how the system was operated.

By the end, deployments were fast, automated, and no longer required supervision. Engineers could work independently without being blocked by the environment or each other.